Create a simple infrared webcam using a Raspberry Pi, a Pi NoIR camera and Flask

Some months ago I bought a Raspberry Pi B+ and a Pi NoIR sensor, with the idea of using them as a small server and for taking IR pictures.

Once the sensor is attached, it is possible to take pictures with the raspistill command:

raspistill -o picture.jpg

It has many parameters to adjust exposure, color and image format, but this basic usage is enough for most cases.
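When the command has to be launched from a script, a small Python wrapper can help. The following is only a sketch: the function names are made up for illustration, and it covers just a few of the raspistill options (`-w`/`-h` for the size, `-q` for JPEG quality, `-t` for the delay in milliseconds, `-ex` for the exposure mode).

```python
import subprocess


def build_raspistill_command(output, width=None, height=None,
                             quality=None, timeout_ms=None, exposure=None):
    """Return the argument list for a single raspistill capture."""
    command = ["raspistill", "-o", output]
    if width is not None:
        command += ["-w", str(width)]
    if height is not None:
        command += ["-h", str(height)]
    if quality is not None:
        command += ["-q", str(quality)]      # JPEG quality, 0-100
    if timeout_ms is not None:
        command += ["-t", str(timeout_ms)]   # delay before the shot, in ms
    if exposure is not None:
        command += ["-ex", exposure]         # exposure mode, e.g. "night"
    return command


def take_picture(output, **options):
    """Run raspistill; raises CalledProcessError if the command fails."""
    subprocess.run(build_raspistill_command(output, **options), check=True)
```

Calling `take_picture("picture.jpg")` is equivalent to the plain command above, while `take_picture("picture.jpg", width=640, height=480)` would produce a smaller image.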

Since the human eye cannot see the infrared spectrum (wavelengths above 750 nm), and consequently computer displays are not designed to represent it, the camera has to remap colors to make room for it. The result is that infrared pictures have strange colors, in this case a yellow tone:

The color depends on the kind of room lighting, in this case a fluorescent light which should emit less IR light than an arc lamp.

The Pi NoIR does not see the full infrared range, which spans from the 750 nm edge of visible light to the 1 mm of the far infrared used by infrared telescopes. It only detects the near-infrared range (around 800 nm), so don’t expect anything like the thermal vision of Predator or Hollow Man, which is based on mid infrared. It still produces something interesting when looking at plants:

Trees shot with the Pi NoIR sensor, without filters

The same street with the “real” colors (from Google Street View)

The trees and the bushes appear pink although they are green, and looking at the green building in the corner it is clear that this is not just a mapping from green to pink. The camera is somehow giving a totally different color only to plants.

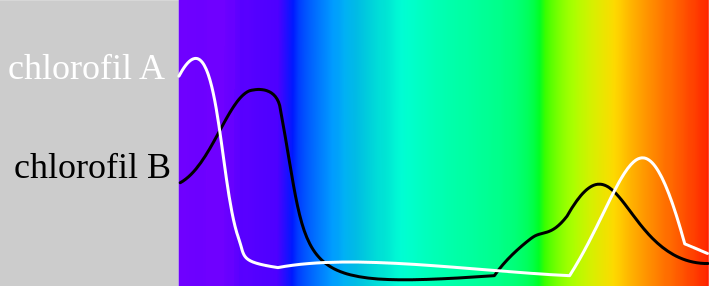

This happens because plant leaves reflect a good amount of light in the near-infrared range, while their chlorophyll absorbs most of the red and blue visible light. The paint used for the building does not behave this way.

Chlorophyll absorption over the visible light spectrum

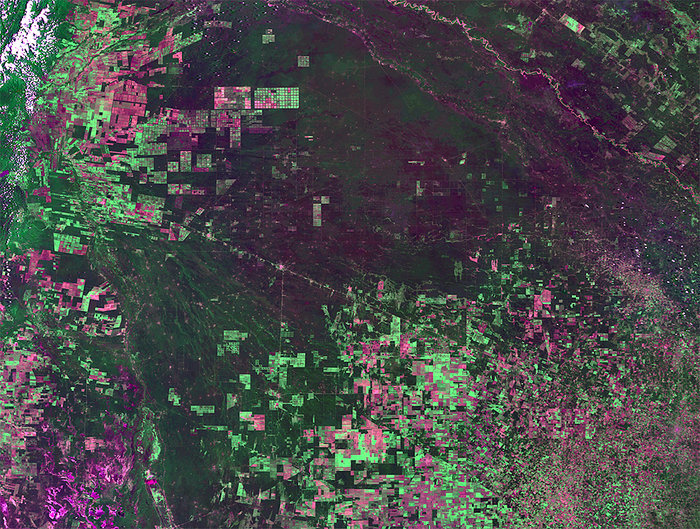

This phenomenon is used by both NASA and ESA to assess the health of vegetation: deforested and dry areas show up in a different color in the near infrared, and different kinds of plants have different spectral signatures.

False colour Proba-V image from 4 February 2014 showing deforestation in Argentina (Photo:ESA/VITO)

The Pi NoIR sensor is sold with a blue filter, a transparent blue plastic rectangle made specifically for inspecting plant health: it filters colors and lets us take pictures that enhance the IR spectrum.

The same scene as above, shot with the filter, looks like this:

Trees shot with the Pi NoIR sensor, with the filter on

Now plants appear yellow, and using the Infragram tools we can make the vegetation areas brighter.

The picture taken with the filter on, processed with Infragram
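The exact processing applied by Infragram is not described here. One index commonly used for this kind of vegetation analysis is the NDVI, computed per pixel as (NIR - VIS) / (NIR + VIS). The sketch below assumes that, with the blue filter on, the red channel mostly records near infrared and the blue channel records visible light. That channel mapping and the function names are assumptions made for illustration.

```python
def ndvi(nir, visible):
    """Normalized difference index in [-1, 1]; 0.0 when both inputs are 0."""
    total = nir + visible
    if total == 0:
        return 0.0
    return (nir - visible) / total


def ndvi_from_pixel(rgb):
    """Assumes a blue-filter photo: red ~ near infrared, blue ~ visible."""
    red, _green, blue = rgb
    return ndvi(red, blue)


def to_gray(index):
    """Map an index in [-1, 1] to a 0-255 brightness value."""
    return round((index + 1) / 2 * 255)
```

Pixels with a high index (strong near-infrared signal compared to the visible one) end up brighter, which is the effect visible on the vegetation areas.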

I can take pictures over SSH, but to see them I need to attach the Raspberry Pi to a TV or transfer them using SFTP (I tried to view them in the terminal as ASCII art, but it wasn’t that clear). Both solutions are uncomfortable.

A friend and I started developing a small Flask application to run the raspistill command and show the resulting image in a web page.

Flask is a Python framework which allows creating web applications by binding Python functions to URL paths and populating Jinja templates to generate web pages.
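As a minimal illustration of these two ideas (not the code of our application), the following sketch binds a function to the `/` path and fills an inline Jinja template. The template text and the values passed to it are placeholders.

```python
from flask import Flask, render_template_string

app = Flask(__name__)

# Inline Jinja template; a real application would usually keep it in templates/.
PAGE = "<h1>{{ title }}</h1><p>Last picture: {{ filename }}</p>"


@app.route("/")
def index():
    # Flask calls this function for requests to "/" and returns the HTML.
    return render_template_string(PAGE, title="IR webcam", filename="picture.jpg")
```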

One of the cool things about Flask is how easy it is to create a simple API, in this case one which just takes an infrared picture, saves it in a folder visible from the web and returns a JSON response with the name of the file.
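An endpoint of this kind could look roughly like the sketch below. It is not our actual code: the `/api/picture` path, the use of POST, the file naming scheme and the JSON field names are all assumptions. The capture function is passed in as a parameter so the endpoint can be tried on a machine without a camera.

```python
import subprocess
import time
from pathlib import Path

from flask import Flask, jsonify


def raspistill_capture(path):
    """Take one picture with raspistill and write it to `path`."""
    subprocess.run(["raspistill", "-o", str(path)], check=True)


def create_app(picture_dir=None, capture=raspistill_capture):
    app = Flask(__name__)
    # By default, save pictures under Flask's static folder so they are
    # reachable from the browser.
    folder = Path(picture_dir) if picture_dir else Path(app.static_folder) / "pictures"

    @app.route("/api/picture", methods=["POST"])
    def take_picture():
        folder.mkdir(parents=True, exist_ok=True)
        filename = "ir-%d.jpg" % int(time.time())
        try:
            capture(folder / filename)
        except (subprocess.CalledProcessError, OSError) as error:
            # Camera busy or missing: report it instead of crashing.
            return jsonify(status="error", message=str(error)), 500
        return jsonify(status="ok", filename=filename)

    return app
```

Running `create_app().run(host="0.0.0.0")` on the Raspberry Pi would expose the endpoint on the local network through Flask's development server.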

With a little JavaScript it is possible to invoke this API (actually, it’s a single method) and show the picture in the browser. Since the process takes a few seconds, the user sees a text message with the status.

Once the image is loaded, it’s easy to call the “API” periodically, in order to take a picture and display it every 60 seconds until the user closes the page. The application supports multiple users: the camera returns an error if asked to take two pictures at the same time, but this does not crash the application.
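Our application simply relies on the error coming from the camera. A possible alternative, shown here only as a sketch with made-up names, is to guard the capture with a lock, so that a second simultaneous request fails immediately with a clear message instead of reaching the camera.

```python
import threading


class CameraBusy(Exception):
    """Raised when a capture is requested while another one is running."""


class GuardedCamera:
    def __init__(self, capture):
        self._capture = capture          # callable that takes the picture
        self._lock = threading.Lock()

    def take(self, path):
        # Non-blocking acquire: fail fast instead of queueing requests.
        if not self._lock.acquire(blocking=False):
            raise CameraBusy("camera is already taking a picture")
        try:
            return self._capture(path)
        finally:
            self._lock.release()
```

The web handler can catch `CameraBusy` and return an error response, leaving the browser free to retry at the next refresh.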

Now this camera can be used remotely from any browser, including mobile ones.